
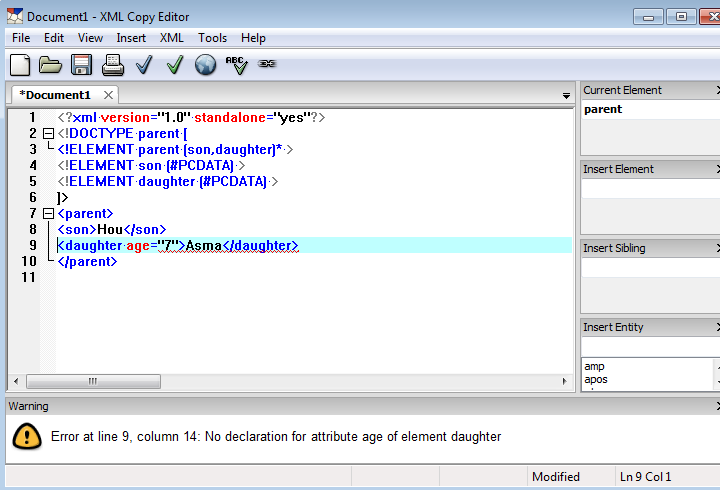

File operations directly from TSQL are a pain. SQL Server is designed to fiddle with objects INSIDE a database, and as soon as you want to step outside of that context (to disk), it's better to use a programming language, as that's its forte.

Elliot Whitlow, who is a heavy contributor here on SSC as well, has a really nice CLR project that I've adopted. That CLR project has functions for both the insert and the extraction of BLOB fields (varbinary and image). It took me like two minutes to install total.

Here's a code snippet demoing both upload to disk and extraction from disk:

    CREATE TABLE myImages(id int, filename varchar(200), rawimage varbinary(max))

    -- purpose: given a path and filename, return the varbinary of the file to store in the database.
    INSERT INTO myImages(id, filename, rawimage)
    SELECT 1, 'fedora_spinner.gif', dbo.MFGetFileImage('C:\Data\', 'fedora_spinner.gif')

    -- purpose: given a varbinary image column in a table, write that image to disk
    -- assumes table and the file from the example above for dbo.MFGetFileImage exists already.
    SELECT = rawimage FROM myImages WHERE id = 1

Receive means a new row of BLOB objects arrives at the receiver SQL Server database. How do I check that? Is it best to use a trigger event, or to build a stored procedure set up as a scheduled job to check that?

I imagine having a flag in the table to indicate whether a row has been extracted. When a new row arrives, the BLOB objects embedded in the row need to be extracted and transferred to another file server, and the flag in the table is set to Y so the same row doesn't get extracted multiple times.

Yeah, that's how I would do it. I'd skip the trigger idea and have a job that runs every x minutes and compares rows that have been processed or not.

Either a flag in the existing row of data to indicate that it was processed, or a separate table which has the PK of the row from the original table for items it has processed. That way you can join the "processed" table to the original for rows that are new.

Does your blob data ever get UPDATED? In that case, I'd use a trigger, but not for just processing new inserts.

There are many times where you will want to extract data from a PDF and export it in a different format using Python. Unfortunately, there aren't a lot of Python packages that do the extraction part very well. In this chapter, we will look at a variety of different packages that you can use to extract text. We will also learn how to extract some images from PDFs. While there is no complete solution for these tasks in Python, you should be able to use the information herein to get you started. Once we have extracted the data we want, we will also look at how we can take that data and export it in a different format.

Let's get started by learning how to extract text!

Extracting Text with PDFMiner

Probably the most well known is a package called PDFMiner. The PDFMiner package has been around since Python 2.4. Its primary purpose is to extract text from a PDF. In fact, PDFMiner can tell you the exact location of the text on the page as well as other information about fonts. For Python 2.4 – 2.7, you can refer to the following websites for additional information on PDFMiner:

PDFMiner is not compatible with Python 3. Fortunately, there is a fork of PDFMiner called PDFMiner.six that works exactly the same.

The directions for installing PDFMiner are outdated at best. If you want to install PDFMiner for Python 3 (which is what you should probably be doing), then you have to do the install like this:

The documentation on PDFMiner is rather poor at best. You will most likely need to use Google and StackOverflow to figure out how to use PDFMiner effectively outside of what is covered in this chapter.

Sometimes you will want to extract all the text in the PDF.
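To make the PDFMiner discussion above a little more concrete, here is a minimal sketch of pulling all the text out of a PDF with PDFMiner.six and saving it to a plain text file. It assumes the fork has been installed from PyPI under the name `pdfminer.six`. The helper names (`extract_pdf_text`, `iter_text_boxes`, `save_text`) are made up for this illustration, and it is one possible way to use the package's high-level functions rather than the only one.

```python
from pathlib import Path


def extract_pdf_text(pdf_path):
    """Return all the text in a PDF as one string, using PDFMiner.six."""
    # Imported inside the function so the other helpers still load
    # on a machine where pdfminer.six is not installed.
    from pdfminer.high_level import extract_text

    return extract_text(str(pdf_path))


def iter_text_boxes(pdf_path):
    """Yield (page_number, bbox, text) for each text box on each page."""
    from pdfminer.high_level import extract_pages
    from pdfminer.layout import LTTextContainer

    pages = extract_pages(str(pdf_path))
    for page_number, page_layout in enumerate(pages, start=1):
        for element in page_layout:
            if isinstance(element, LTTextContainer):
                # bbox is (x0, y0, x1, y1), measured from the
                # bottom-left corner of the page.
                yield page_number, element.bbox, element.get_text()


def save_text(text, out_path):
    """Write text to a UTF-8 file, trimming trailing whitespace per line."""
    cleaned = "\n".join(line.rstrip() for line in text.splitlines())
    out_path = Path(out_path)
    out_path.write_text(cleaned + "\n", encoding="utf-8")
    return out_path
```

With a PDF on disk, something like `save_text(extract_pdf_text("example.pdf"), "example.txt")` would export the text, where both file names are placeholders. `iter_text_boxes` is there to show where the location information mentioned earlier comes from.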
